Historically, raw data was typically stored in transactional databases that could handle many read and write requests but offered limited analytical capabilities.įor example, in an eCommerce environment, the transactional database stored the purchased item, customer details, and order details in one transaction - think of it as one row in a spreadsheet. The ETL process can be traced to the emergence of relational databases and attempts to convert data from transactional data formats, such as financial and logistical data, to relational data formats, such as Microsoft SQL Servers, Oracle Database, and MySQL, which are suitable for analysis. To achieve this, Whatgraph delivered a data transfer workload that saves the time needed to load data from multiple sources to BigQuery. When designing Whatagraph, we were going for a tool that would be able to quickly and reliably load data from various sources into a central repository, while ensuring data quality. Now, however, many ETL tools automate and simplify the process. Historically, ETL was time-consuming and prone to error, even if it bound whole teams of tech to manage it. The ETL process should be automated, well-defined, continuous, and occur in batches. Incremental loading: A slower but more manageable approach where incoming data is compared with what is already in the storage and only produces additional records if new and unique information is found.Although reasonably fast, the full loading process produces datasets that quickly grow to the point where they become difficult to maintain.

Full loading: In this loading scenario, everything that comes from the transformation pipeline lands into new unique records in the data warehouse.The data is usually loaded as a whole (full loading), which is followed by periodic changes (incremental loading) and, less often, full refreshes to erase and replace unnecessary data in the warehouse. In the last step of the ETL process, the transformed data goes from the staging area into a client’s data warehouse.

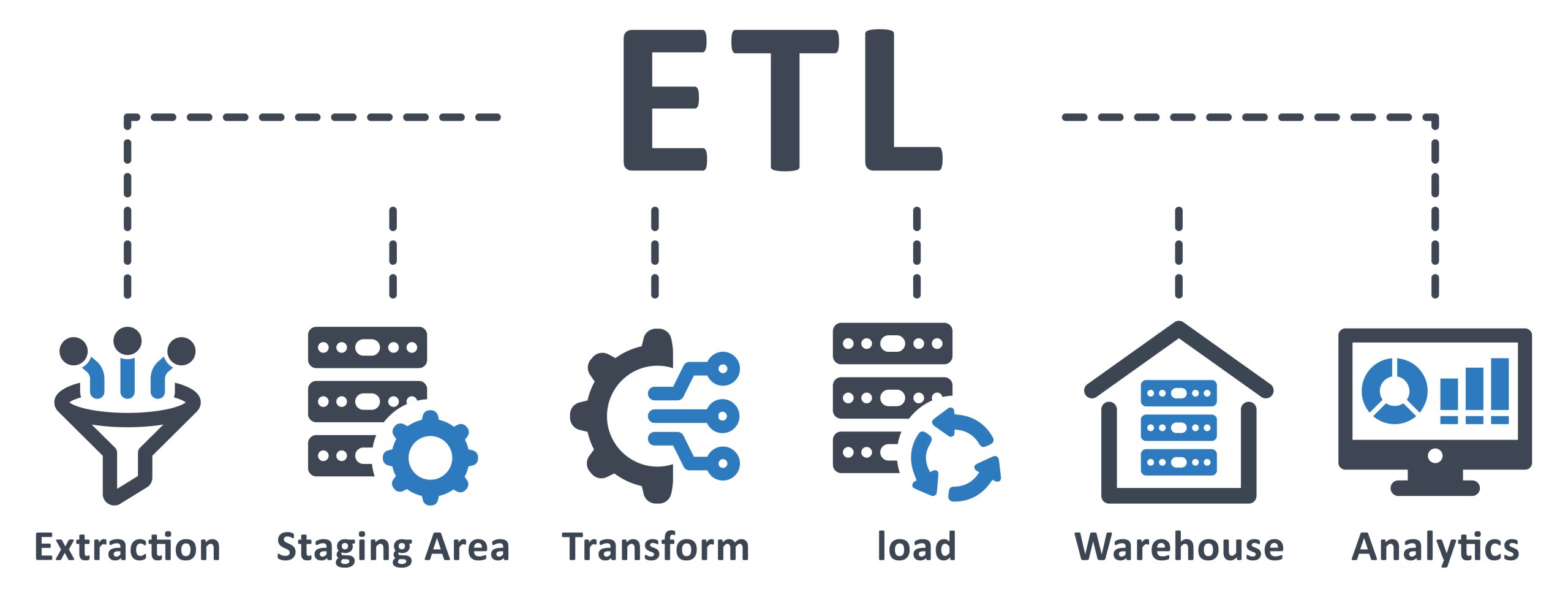

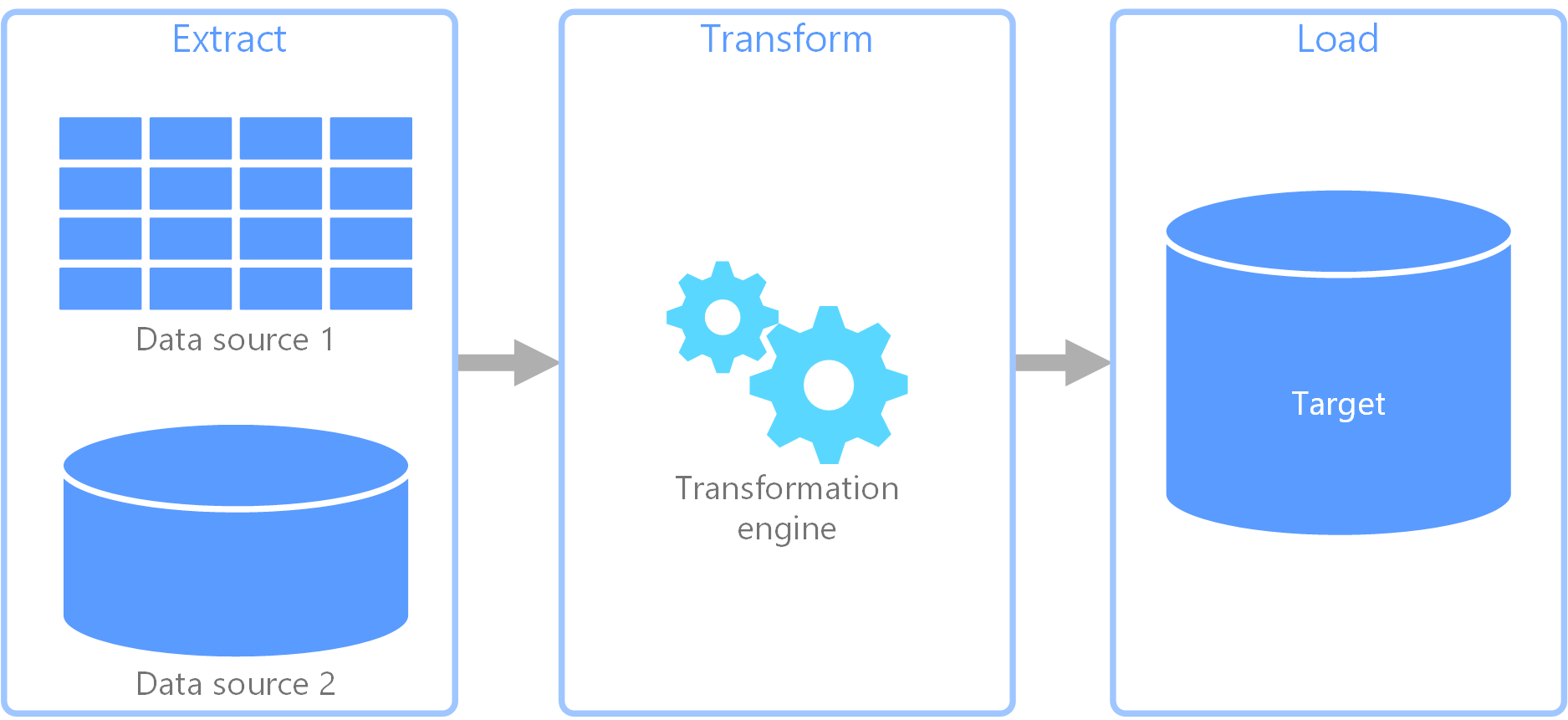

Transformation is typically the most important part of the ETL process, as it improves data integrity, removes duplicate data, and ensures that raw data arrives at its destination in a state ready to use. Formatting the data into tables or joined tables to match the dataset schema of the data warehouse.Removing, encrypting, or protecting data governed by industry or governmental regulators.Running audits to ensure data integrity and compliance.This often involves changing row and column headers for consistency, converting currencies and other measurement units, editing text strings, etc. Calculations, translations, and summarization of raw data.Filtering, cleansing, deduplication, validation, and authentication of data.The raw data is processed in the staging area, which includes data transformation and consolidation for its intended use case. This data can come from a variety of data sources, both structured and unstructured data. In other words, it enters the data pipeline. In the extraction phase, raw data is copied or exported from data source locations to a staging area. For this process to work, the data must flow freely between systems and apps. Extractĭata-driven businesses manage data from a variety of sources and use different data analysis tools to achieve business intelligence. To explain how ETL works, we need to understand what takes place in each step of the process. Load data into a target destination, such as a data warehouse.Cleanse their data to improve its quality and make it more consistent.However, ETL can also handle more advanced analytics, allowing teams to improve both the back-end processes and end-user experience. Using a series of rules, ETL cleans and organizes data in a way that suits specific business intelligence needs, such as monthly reporting. Over time, it has become the primary method of processing data for data warehousing workflows.ĮTL is an essential part of data analytics and machine learning processes. 
The ETL process has a key role in achieving business intelligence and enabling a wide range of data management strategies.Īs the popularity of databases grew in the 1970s, ETL was introduced as a way of integrating and loading data for analysis and computation. Transform data by removing duplicates, combining similar sets, and ensuring quality.When moving large volumes of data from different sources to a single centralized database, you need to

ETL is a process that allows companies to gather data from multiple source systems and store it in a single location to analyze or visualize consolidated data.
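To make the extract, transform, and load steps more concrete, here is a minimal Python sketch of an in-memory pipeline. It is an illustration only, not how Whatagraph or any particular ETL tool works: the function names, the `order_id`, `amount`, and `currency` fields, the sample orders, and the exchange rates are all assumptions made up for this example, and plain Python lists stand in for the staging area and the data warehouse.

```python
# Illustrative exchange rates to USD (made-up numbers, not real market data).
RATES_TO_USD = {"USD": 1.0, "EUR": 1.10}


def extract(sources):
    """Extract: copy raw rows from every source into one staging list."""
    staging = []
    for rows in sources:
        staging.extend(dict(row) for row in rows)
    return staging


def transform(staging):
    """Transform: normalize headers, validate, deduplicate, convert currency."""
    seen = set()
    clean = []
    for raw in staging:
        # Make column headers consistent, e.g. "Order ID" -> "order_id".
        row = {k.strip().lower().replace(" ", "_"): v for k, v in raw.items()}
        order_id = row.get("order_id")
        currency = row.get("currency")
        # Validation: skip rows with no id or an unknown currency.
        if not order_id or currency not in RATES_TO_USD:
            continue
        # Deduplication: keep only the first row seen for each order id.
        if order_id in seen:
            continue
        seen.add(order_id)
        amount_usd = round(float(row["amount"]) * RATES_TO_USD[currency], 2)
        clean.append({"order_id": order_id, "amount_usd": amount_usd})
    return clean


def load_full(warehouse, rows):
    """Full loading: every incoming row lands as a new record."""
    warehouse.extend(rows)


def load_incremental(warehouse, rows):
    """Incremental loading: add only rows whose id is not stored yet."""
    existing = {r["order_id"] for r in warehouse}
    added = 0
    for row in rows:
        if row["order_id"] not in existing:
            warehouse.append(row)
            existing.add(row["order_id"])
            added += 1
    return added


# Two hypothetical sources with inconsistent headers and one duplicate order.
shop_a = [
    {"Order ID": "A1", "Amount": "10.00", "Currency": "EUR"},
    {"Order ID": "A1", "Amount": "10.00", "Currency": "EUR"},
]
shop_b = [{"order_id": "B7", "amount": "5.50", "currency": "USD"}]

clean = transform(extract([shop_a, shop_b]))

full_wh, incr_wh = [], []
for _ in range(2):  # simulate the same batch arriving twice
    load_full(full_wh, clean)
    load_incremental(incr_wh, clean)

print(len(full_wh), len(incr_wh))  # prints "4 2"
```

Loading the same batch twice shows the trade-off between the two loading strategies: the full load ends up with four records because it never checks what is already stored, while the incremental load stays at two because it compares incoming rows with the warehouse first. A real warehouse would make that comparison with keys or timestamps in the database rather than a Python set.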
